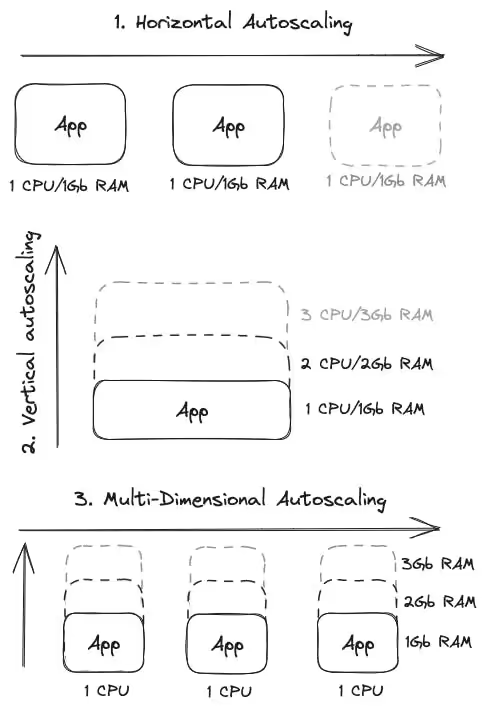

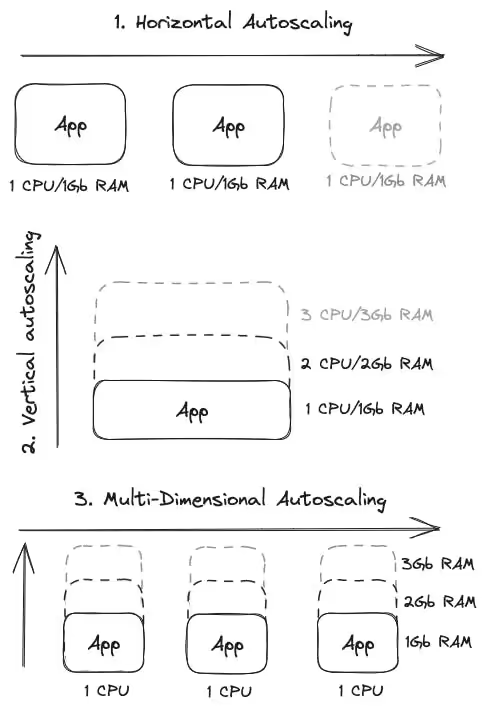

Application autoscaling is a broad subject. It looks simple at first because everyone understands the goal and how it works conceptually, but it is much harder in practice.

Let’s start with a simple diagram showing the types of application autoscaling that exist today:

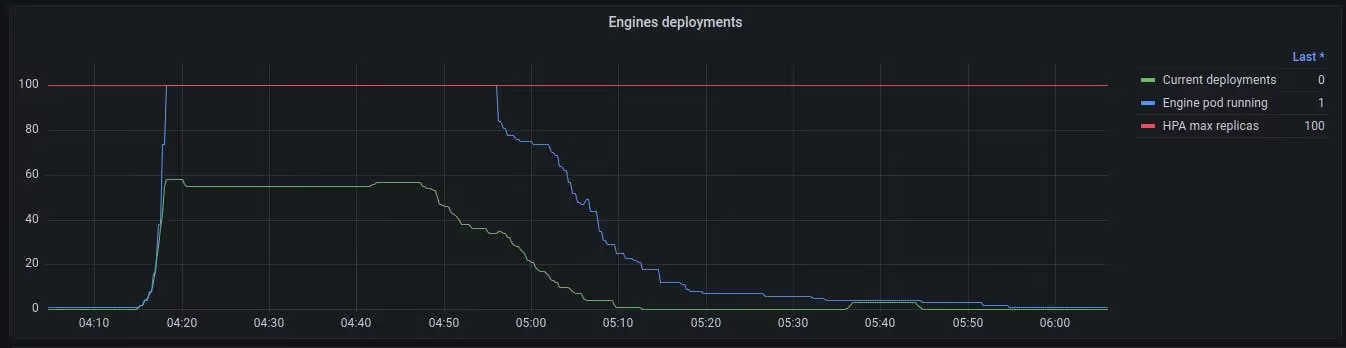

At Qovery, we use horizontal and vertical autoscaling daily in production at different levels. The results are excellent, but only once the tuning has been done, and that takes days or weeks of collecting statistics, analysis, and configuration.

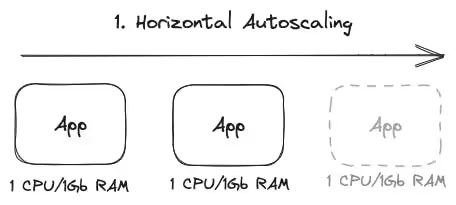

Horizontal autoscaling scales an application out by adding more instances and distributing the workload across them, which increases capacity and improves performance.

Instances are added or removed automatically based on predefined rules or metrics. When the workload increases, additional instances are provisioned dynamically to handle the demand. Conversely, when the workload decreases, excess instances are terminated automatically to optimize resource utilization and cost.
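To make the "predefined rules or metrics" idea concrete, here is a minimal sketch of a proportional scaling rule, similar in spirit to the one used by the Kubernetes Horizontal Pod Autoscaler. The function name, the default bounds, and the 10% tolerance band are assumptions chosen for illustration, not values from any specific platform.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,    # hypothetical lower bound
    max_replicas: int = 10,   # hypothetical upper bound
    tolerance: float = 0.1,   # hypothetical dead band around the target
) -> int:
    """Return how many instances keep the per-instance metric near its target."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")

    ratio = current_metric / target_metric

    # Close enough to the target: do nothing, to avoid scaling back and forth.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas

    # Scale the instance count proportionally, rounding up to stay on the safe side.
    wanted = math.ceil(current_replicas * ratio)

    # Never go below the minimum or above the maximum.
    return max(min_replicas, min(max_replicas, wanted))


# 4 instances at 90% average CPU with a 60% target: scale out.
print(desired_replicas(4, 90, 60))
# 4 instances at 30% average CPU with a 60% target: scale in.
print(desired_replicas(4, 30, 60))
```

The minimum and maximum bounds matter as much as the formula itself: they are what protects you from a runaway metric turning into a runaway bill.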

Horizontal autoscaling offers several benefits, including:

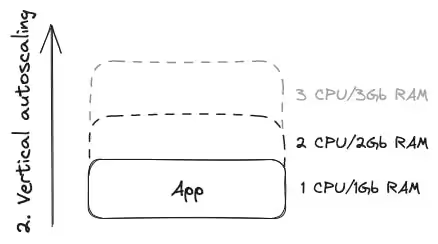

Vertical autoscaling gives your application more capacity by upgrading the resources of a single instance instead of adding more machines (scaling horizontally). It's like boosting your computer by increasing its CPU power, memory, storage, or network capacity.

With vertical autoscaling, you can improve your application's performance without the need to manage many instances. It simplifies administration and reduces the complexity of handling a distributed system.

However, there's a maximum limit to how much you can upgrade an instance before hitting hardware constraints. Also, scaling up or down vertically may require restarting or reconfiguring the machine, resulting in temporary downtime or disruption.
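Both constraints can be sketched in a few lines. The example below sizes one instance from its observed memory usage, clamps the result to a hardware ceiling, and only triggers a resize when the change is large enough to justify a restart. All names, the 20% headroom, the 4096 MiB ceiling, and the 10% restart threshold are assumptions for illustration.

```python
import math


def recommend_memory_mib(
    usage_samples_mib: list[float],
    headroom_pct: int = 20,   # hypothetical safety margin above the observed peak
    min_mib: int = 128,       # hypothetical floor
    max_mib: int = 4096,      # hypothetical hardware ceiling for one instance
) -> int:
    """Recommend a memory size for a single instance from observed usage."""
    if not usage_samples_mib:
        raise ValueError("at least one usage sample is required")

    peak = max(usage_samples_mib)
    wanted = math.ceil(peak * (100 + headroom_pct) / 100)

    # The instance cannot grow past what the hardware offers.
    return max(min_mib, min(max_mib, wanted))


def worth_restarting(current_mib: int, recommended_mib: int, threshold: float = 0.1) -> bool:
    """Resizing may restart the instance, so skip changes smaller than the threshold."""
    return abs(recommended_mib - current_mib) / current_mib > threshold


recommended = recommend_memory_mib([400, 500, 450])
print(recommended, worth_restarting(512, recommended))
```

When the recommendation hits the ceiling, vertical scaling has nothing left to give, and the only remaining option is to distribute the workload.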

Vertical autoscaling is commonly used in traditional setups or when the workload can't be easily distributed across multiple instances. It's handy for applications that require a lot of computational power, memory, or specialized hardware configurations.

Although horizontal autoscaling has gained popularity with cloud computing and containers, vertical autoscaling still plays a role in optimizing the performance and resource utilization of individual instances in specific situations.

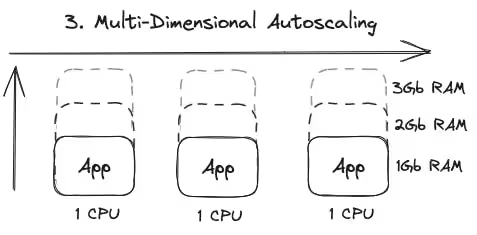

Multidimensional autoscaling is like having a super-smart system that automatically adjusts the resources of your application or system in multiple dimensions to handle changing demands. It's all about ensuring your application has the right power and capacity when needed.

Think of it as a dynamic team of helpers that can scale both the number of instances and the resources within each instance, up or down. It's like giving your application a turbo boost or dialing it down when the workload changes.

With multidimensional autoscaling, you don't have to adjust resources or add more instances manually. The system takes care of it for you, continuously monitoring metrics like CPU usage, memory, network traffic, or any other custom-defined criteria.

When your application is experiencing high traffic or increased resource demands, multidimensional autoscaling will intelligently add more resources to ensure smooth performance and prevent any slowdowns or crashes. On the other hand, when the workload decreases, it will automatically scale down to optimize resource usage and save costs.
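As a rough sketch of what "multiple dimensions" means, the example below decides the instance count and the per-instance memory in a single pass. Letting CPU drive the number of instances while memory drives the size of each instance is an assumption made for this illustration, as are all names and default limits; a real multidimensional autoscaler may combine signals differently.

```python
import math
from dataclasses import dataclass


@dataclass
class Plan:
    replicas: int
    memory_mib: int


def plan_capacity(
    replicas: int,
    avg_cpu_pct: float,
    target_cpu_pct: float,
    peak_memory_mib: float,
    max_replicas: int = 10,      # hypothetical horizontal limit
    max_memory_mib: int = 4096,  # hypothetical vertical limit
    headroom_pct: int = 20,      # hypothetical memory safety margin
) -> Plan:
    """Pick an instance count and a per-instance memory size together."""
    # Horizontal dimension: the instance count follows CPU usage.
    wanted_replicas = math.ceil(replicas * avg_cpu_pct / target_cpu_pct)

    # Vertical dimension: per-instance memory follows the observed peak plus headroom.
    wanted_memory = math.ceil(peak_memory_mib * (100 + headroom_pct) / 100)

    return Plan(
        replicas=max(1, min(max_replicas, wanted_replicas)),
        memory_mib=min(max_memory_mib, wanted_memory),
    )


print(plan_capacity(replicas=3, avg_cpu_pct=80, target_cpu_pct=50, peak_memory_mib=1000))
```

Note that both limits still have to be chosen by you, which is why this approach requires knowing your application well.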

The beauty of multidimensional autoscaling is that it considers multiple factors to make the right decisions. It's like having a super-smart teammate who knows when to boost your application and when to hold back to avoid wasting resources.

By employing multidimensional autoscaling, you can ensure your application stays resilient, responsive, and cost-effective. It's like having a magical elastic system that expands and contracts as needed, effortlessly adapting to the ever-changing demands of your application.

Unfortunately, this feature is exclusive to Google Cloud and unavailable as an open-source project.

Three solutions exist. The most common is definitely horizontal autoscaling. Many large companies already use it, and it works well in many situations. Vertical autoscaling is helpful, but its limitations restrict where it can be used. Multidimensional autoscaling may be the best of the three, but it requires you to know your application very well when setting limits.

Tests are mandatory to ensure the behavior of the autoscaler is the one expected for your application!
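One inexpensive way to start is to replay a load trace through your scaling rule before trusting it in production. The sketch below uses a simplified proportional rule and a made-up trace; the function names, the 60% target, and the bounds are all assumptions for illustration. Real tests should also run against the actual autoscaler with realistic traffic.

```python
import math


def scale(replicas: int, cpu_pct: float, target_pct: float = 60,
          min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Simplified proportional rule (hypothetical target and bounds)."""
    wanted = math.ceil(replicas * cpu_pct / target_pct)
    return max(min_replicas, min(max_replicas, wanted))


def replay(total_load: list[float], start_replicas: int = 2) -> list[int]:
    """Replay a trace of total load and record the instance count after each step.

    Each load value is expressed as a percentage of one instance's CPU,
    so 300 means "three instances' worth of work".
    """
    history = []
    replicas = start_replicas
    for load in total_load:
        per_instance_cpu = load / replicas
        replicas = scale(replicas, per_instance_cpu)
        history.append(replicas)
    return history


# A made-up traffic spike followed by a quiet period.
history = replay([120, 300, 600, 900, 300, 60])

# The autoscaler must always stay within its configured bounds.
assert all(1 <= r <= 10 for r in history)
print(history)
```

Assertions like the bounds check above are the kind of expectation worth writing down: they turn "the behavior I expect" into something that fails loudly when the configuration changes.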

The engineering, product, and developer experience team behind the Qovery platform.
