Enforcing security baselines across 1,000s of Kubernetes clusters

Key points

- Policy drift is a compounding threat vector: Every manual override, stale infrastructure-as-code template, and one-off namespace exception silently widens the gap between your declared security baseline and your actual fleet state. The gap only becomes visible when an auditor looks.

- Periodic benchmark scans reveal non-compliance after it exists: Running kube-bench quarterly tells you what was wrong at the time of the scan. An agentic control plane prevents non-compliance from persisting by reconciling live cluster state against declared policy continuously.

- Multi-cloud means three different implementations of the same policy: EKS, GKE, and AKS handle admission control, RBAC, and audit logging differently. Without an abstraction layer, the "same policy" across three clouds is actually three separate implementations with their own behavioral gaps.

Agentic Kubernetes compliance enforcement uses an autonomous control plane to continuously enforce, detect drift from, and reconcile security baselines across every cluster in a multi-cloud fleet. It replaces point-in-time audits and per-cluster configuration with fleet-level policy that propagates automatically, making compliance a persistent infrastructure property rather than a recurring verification exercise.

The part teams consistently underestimate is that OPA Gatekeeper, the tool most people reach for first, only enforces policy at the cluster level. It blocks non-compliant resources from being created within a single cluster. Propagating consistent Gatekeeper policies across 300 clusters, and detecting when those policies drift, is a fleet orchestration problem that Gatekeeper was not designed to solve.

The audit finding nobody expected

A SOC 2 auditor flags a finding: dozens of clusters are running a pod security configuration that was supposedly patched three months ago. The investigation reveals that a new cluster was provisioned from a stale infrastructure-as-code template that predated the patch. The cluster passed its initial deployment checks because the checks validated the template, not the live state.

This is not an unusual story. It repeats across organisations operating Kubernetes at fleet scale because Kubernetes security is a continuous state, not a deployment-time property. Admission controllers, RBAC bindings, network policies, audit logging configuration, and secrets management must be enforced across every cluster, continuously, and verified against the intended baseline in real time.

The only approach that works at fleet scale is an agentic control plane that treats policy as a first-class infrastructure primitive, enforcing baselines automatically rather than relying on engineers to propagate changes manually across hundreds of clusters.

The compliance surface grows faster than your team

Every cluster added to the fleet is a new compliance surface. Admission controllers must be configured correctly. RBAC policies must map to the organisation's identity model. Network policies must isolate workloads according to the security baseline. Audit logging must be enabled and forwarding to the correct destination. Secrets must be managed through the approved provider, not stored in ConfigMaps or environment variables.

# A fast audit of secrets stored incorrectly as environment variables

# This is what you find when you finally look across the fleet

$ kubectl get pods --all-namespaces -o json | jq '

.items[] |

select(.spec.containers[].env[]?.value? | test("password|secret|key|token"; "i")) |

{

namespace: .metadata.namespace,

pod: .metadata.name,

container: .spec.containers[].name

}'

# On 10 clusters this takes an afternoon.

# On 500 clusters, nobody runs this query at all.

# That is the compliance gap.

At 10 clusters, a platform team can maintain this manually. At 100 clusters with multiple cloud providers and teams pushing deployments daily, the configuration surface becomes unmanageable. A senior platform engineer can correctly configure a single cluster in an afternoon. That same engineer cannot verify the configuration remains correct across 500 clusters after six months of hotfixes, upgrades, and team changes.

The hidden anatomy of fleet-wide compliance failure

1. The stale template problem

New clusters provisioned from infrastructure-as-code templates inherit whatever baseline existed when the template was last updated. A security patch applied to live clusters in response to a vulnerability may never propagate back to the template repository.

The next cluster provisioned from that template ships with the old configuration. Over time, the fleet silently diverges: clusters provisioned in January carry a different security baseline than clusters provisioned in June, and no single view shows which clusters are running which version.

# Terraform EKS module — the version pinned here may not match

# the security baseline applied manually to live clusters last month

module "eks" {

source = "terraform-aws-modules/eks/aws"

version = "~> 20.0"

cluster_name = var.cluster_name

cluster_version = "1.31"

# If a Pod Security Admission label was added manually to live clusters

# but never committed back here, every new cluster provisioned from this

# module ships without it. The template is the lie.

}

# The fix: enforce security labels as part of namespace provisioning

resource "kubernetes_namespace" "workload" {

metadata {

name = var.namespace

labels = {

"pod-security.kubernetes.io/enforce" = "restricted"

"pod-security.kubernetes.io/audit" = "restricted"

"pod-security.kubernetes.io/warn" = "restricted"

}

}

}2. The namespace exception accumulation

A developer requests an exception to run a container as root for a debugging session. The exception persists after the session ends. Another team copies the configuration for their own namespace. Within months, a pod security standard that was supposed to be enforced fleet-wide has dozens of exceptions that nobody tracks centrally.

# Find namespaces where Pod Security Standards have been overridden

# or exempted across the fleet

$ kubectl get namespaces -o json | jq '

.items[] |

select(

(.metadata.labels["pod-security.kubernetes.io/enforce"] == "privileged") or

(.metadata.labels["pod-security.kubernetes.io/enforce"] == null)

) |

{

namespace: .metadata.name,

enforce: .metadata.labels["pod-security.kubernetes.io/enforce"],

audit: .metadata.labels["pod-security.kubernetes.io/audit"]

}'

# In a compliant fleet, this returns nothing.

# In most fleets, this returns a list that surprises everyone.

The default namespace, which should rarely be used in production, accumulates workloads from teams that bypassed the standard namespace provisioning process. RBAC boundaries exist in policy but not in practice.

3. The multi-cloud consistency gap

EKS, GKE, and AKS each implement admission control, RBAC, and audit logging differently.

EKS uses IAM role mappings through the aws-auth ConfigMap for identity. That ConfigMap is a well-known attack surface: misconfigured entries can grant cluster-admin to unintended principals, and the ConfigMap has no admission controller protecting it by default.

# aws-auth ConfigMap — EKS identity mapping

# A single misconfigured entry here grants cluster-admin to the wrong principal

apiVersion: v1

kind: ConfigMap

metadata:

name: aws-auth

namespace: kube-system

data:

mapRoles: |

- rolearn: arn:aws:iam::123456789012:role/NodeInstanceRole

username: system:node:{{EC2PrivateDNSName}}

groups:

- system:bootstrappers

- system:nodes

# This entry should not be here. It was added during an incident

# and never removed. Nobody noticed because nobody audits this file.

- rolearn: arn:aws:iam::123456789012:role/DevTeamRole

username: dev-team

groups:

- system:masters

GKE uses Google Cloud IAM with Workload Identity. AKS integrates with Microsoft Entra ID. Without an abstraction layer that translates security intent into provider-specific configurations, the "same policy" across three clouds is actually three different implementations of the same intention. Each has its own syntax, defaults, and behavioral edge cases that can create compliance gaps invisible to any single-provider audit.

Why point-in-time audits and native tooling are structurally insufficient

Two misconceptions persist about compliance at scale.

The first is that periodic benchmark scans (running kube-bench or CIS Benchmark assessments quarterly) are sufficient. These tools only reveal non-compliance after it has already existed, sometimes for months. A cluster that passes a scan in January and drifts by February is non-compliant for the entire period between scans.

# kube-bench: CIS Kubernetes Benchmark assessment

$ kube-bench run --targets node --benchmark eks-1.4.0

# This output tells you what is wrong right now.

# It tells you nothing about what drifted since the last scan.

# And it runs on one cluster at a time.

# For a fleet of 200 clusters, you need something like this:

$ for cluster in $(kubectl config get-contexts -o name); do

kubectl config use-context "$cluster"

kube-bench run --targets node --benchmark eks-1.4.0 \

--outputfile "/reports/${cluster}-bench-$(date +%Y%m%d).json" \

--json

done

# This takes hours, produces 200 separate JSON files,

# and still tells you nothing about drift between scans.

The second misconception is that OPA Gatekeeper solves the fleet compliance problem. Gatekeeper is a powerful admission controller at the cluster level. It can block non-compliant resources from being created within a single cluster.

However, maintaining consistent Gatekeeper policies across 1,000 clusters requires a fleet-level orchestration layer that Gatekeeper itself does not provide.

# OPA Gatekeeper ConstraintTemplate — valid at cluster level

apiVersion: templates.gatekeeper.sh/v1

kind: ConstraintTemplate

metadata:

name: k8srequiredlabels

spec:

crd:

spec:

names:

kind: K8sRequiredLabels

targets:

- target: admission.k8s.gatekeeper.sh

rego: |

package k8srequiredlabels

violation[{"msg": msg}] {

provided := {label | input.review.object.metadata.labels[label]}

required := {label | label := input.parameters.labels[_]}

missing := required - provided

count(missing) > 0

msg := sprintf("missing required labels: %v", [missing])

}

# This policy enforces labels on this cluster.

# Deploying and keeping this policy consistent across 500 clusters

# is the problem Gatekeeper does not solve.

# That is a fleet orchestration problem.

Policy authoring, version management, propagation, and drift detection across the fleet are operational problems that exist above the cluster level. Visibility into non-compliance is not the same as enforcement of compliance.

Qovery as the agentic compliance enforcement layer

Qovery functions as the control plane above EKS, GKE, and AKS that operationalises security intent at fleet scale. Platform teams author policies at the organisation level: pod security standards, RBAC configurations, resource limits, and deployment rules. The platform translates these into provider-specific configurations and propagates them to every cluster in the fleet.

The translation layer is what makes the multi-cloud consistency problem tractable. Instead of maintaining three implementations of the same policy, platform engineers author intent once. Qovery generates the correct EKS IAM mapping, GKE Workload Identity binding, or AKS Entra ID integration from the same source of truth.

Continuous drift detection identifies clusters whose live state has diverged from declared policy, whether through manual overrides, failed rollouts, or stale templates. The enforcement is owner-aware: violations are routed to the responsible team with specific remediation context before automated action is taken. Engineers see what drifted and why before the platform reconciles the state.

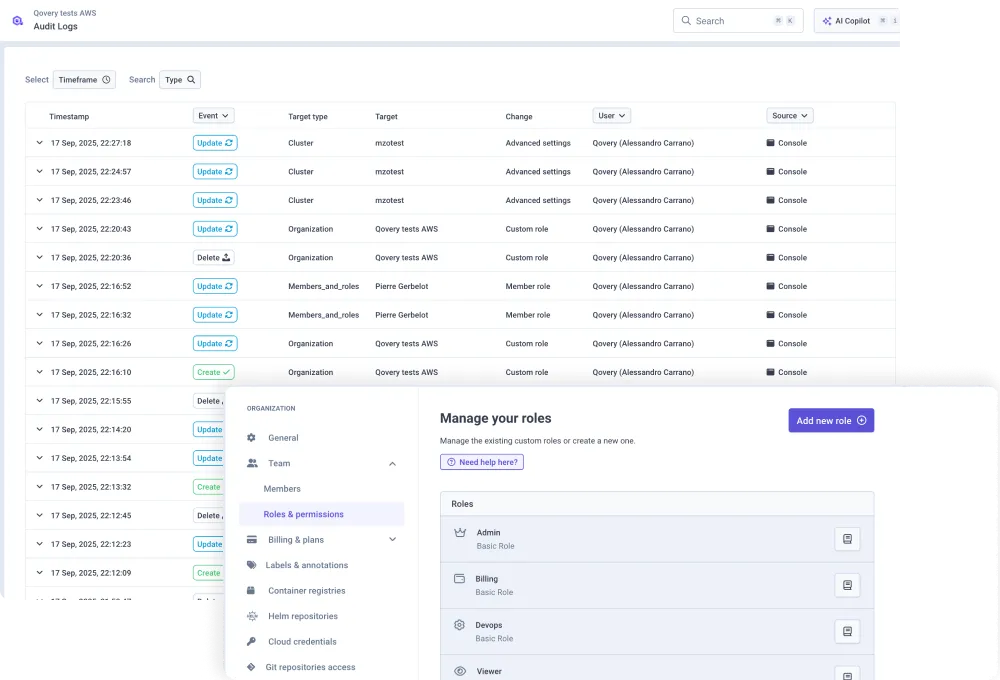

Every policy change, drift detection event, and reconciliation action is logged with identity, timestamp, and target cluster. That audit trail is a byproduct of normal operations, not something assembled the week before a review cycle. Qovery's security architecture extends these guarantees to the platform itself.

From policy intent to fleet-wide enforcement

Consider propagating a new Pod Security Standard across 300 clusters. Without an agentic control plane, the platform team writes a policy, tests it on staging, then applies it to each cluster individually or through a CI/CD pipeline that targets each cluster's API server sequentially.

At best, this takes days of engineering time. At worst, the rollout stalls partway through, leaving the fleet in a split state where only some clusters enforce the new standard.

# What manual fleet-wide policy rollout looks like

# This is the approach that stalls at cluster 47 when something breaks

CLUSTERS=($(kubectl config get-contexts -o name))

POLICY_MANIFEST="pod-security-standard.yaml"

FAILED=()

for cluster in "${CLUSTERS[@]}"; do

kubectl config use-context "$cluster"

if ! kubectl apply -f "$POLICY_MANIFEST"; then

echo "Failed on $cluster"

FAILED+=("$cluster")

fi

sleep 2 # Rate limiting to avoid API server overload

done

echo "Failed clusters: ${FAILED[@]}"

# Now you have 300 clusters in an unknown split state.

# Some have the new policy. Some do not.

# You do not have a reliable rollback path.

With an agentic control plane, the platform team defines the policy once. The platform validates it against a canary set of clusters, then propagates it fleet-wide with rollback capability if validation fails. The process completes in hours rather than days, and the platform confirms which clusters are compliant and which require attention, without anyone writing a bash loop to find out.

Compliance as a default, not a quarterly sprint

The goal is infrastructure that is provably compliant at all times. With this architecture, it is not possible to provision a non-compliant cluster because every new cluster inherits the organisation's security baseline automatically. There is no stale template problem because the baseline is applied by the platform, not baked into a Terraform module that someone forgot to update.

Organisations that reach this state stop treating compliance as a quarterly sprint where engineers assemble evidence, remediate findings, and hope nothing drifts before the next audit. Compliance evidence is generated continuously. Audit trails exist as a first-class output. Security baselines are enforced by the platform rather than by human discipline.

For teams currently evaluating how to structure this, the Kubernetes best practices for production guide covers the specific RBAC, network policy, and secrets management configurations that form the technical baseline worth enforcing fleet-wide.

🚀 Real-world proof

Syment needed to eliminate the infrastructure overhead consuming their engineering team and establish consistent governance across their deployment pipeline without dedicated DevOps headcount.

⭐ The result: Infrastructure maintenance dropped to under two hours per month, the team saved €120K in DevOps costs, and deployments went from manual processes to three-click deploys with built-in compliance guardrails. Read the Syment case study.

Conclusion

Kubernetes compliance at fleet scale is an enforcement problem, not an auditing one. Quarterly benchmark scans tell you what was wrong at a specific point in time. They do not prevent drift, catch stale templates, or surface namespace exceptions that accumulated over six months. An agentic control plane does.

The operational difference is measurable. Teams that manage compliance through continuous enforcement rather than periodic remediation spend less time assembling audit evidence and more time on work that improves the platform. The audit trail is generated automatically. The baselines are enforced by the system rather than the team. The quarterly sprint becomes a non-event.

FAQs

How can platform teams enforce Kubernetes security baselines at scale without manual YAML management?

An agentic control plane allows platform teams to author security policies at the fleet level and propagate them automatically to every cluster, regardless of cloud provider. The platform translates organisational intent into provider-specific configurations for EKS, GKE, and AKS, eliminating the need to maintain separate YAML files per cluster. Policy updates propagate fleet-wide in hours. The critical detail is that the platform also detects drift continuously, meaning a policy applied today does not silently erode by next quarter.

What is policy drift in Kubernetes, and why does it compound at enterprise scale?

Policy drift occurs when the live state of a cluster diverges from its declared security baseline. At enterprise scale, drift compounds because each cluster can drift independently: a manual override on one cluster, a stale template on another, a namespace exception copied across a third. Without continuous drift detection, the fleet develops an inconsistent security posture that only becomes visible during audits. At that point, the remediation effort is proportional to the number of clusters and the duration of the drift, neither of which is small.

How does agentic compliance enforcement differ from benchmark scanning tools like kube-bench?

Benchmark scanning tools like kube-bench assess cluster configuration at a specific point in time. They are retrospective: they reveal non-compliance after it has occurred. Agentic enforcement is continuous and preventative. It blocks non-compliant configurations from being applied, detects drift from declared baselines in real time, and reconciles fleet state automatically. The distinction matters operationally: scanning produces a remediation backlog. Agentic enforcement produces an audit trail showing the issue was caught and resolved before it became a finding.

Suggested articles

.webp)

.svg)

.svg)

.svg)