Kubernetes Cluster Autoscaler vs Karpenter

One of the most exciting things about Kubernetes is the ability to scale the number of nodes up and down based on application consumption. You don’t have to add and remove nodes manually; the cluster size follows actual usage. Of course, you still want to control the minimum and maximum number of nodes to avoid an unexpected bill.

Cluster Autoscaler is a tool that automatically adjusts the size of the Kubernetes cluster when one of the following conditions is true:

- some pods failed to run in the cluster due to insufficient resources.

- there are nodes in the cluster that have been underutilized for an extended period of time, and their pods can be placed on other existing nodes.
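The two conditions, together with the minimum and maximum node bounds, can be sketched as a small decision function. This is a simplified illustration, not Cluster Autoscaler's actual implementation: the `Node` type, the `decide` function, the 50% utilization threshold, the 10-minute window, and the CPU-only resource model are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Node:
    """Hypothetical, CPU-only view of a node (illustration only)."""
    name: str
    cpu_capacity: float         # total CPU cores on the node
    cpu_requested: float        # sum of CPU requests of the pods on the node
    underutilized_minutes: int  # how long the node has stayed below the threshold


def decide(
    nodes: List[Node],
    pending_pod_cpu: List[float],        # CPU requests of pods that could not be scheduled
    min_nodes: int,
    max_nodes: int,
    utilization_threshold: float = 0.5,  # assumed value for the sketch
    unneeded_minutes: int = 10,          # assumed value for the sketch
) -> str:
    # Condition 1: some pods failed to run due to insufficient resources.
    if pending_pod_cpu:
        # Never grow past the configured maximum, to keep the bill bounded.
        return "scale-up" if len(nodes) < max_nodes else "no-op"

    # Condition 2: a node has been underutilized for a while and its pods
    # can be placed on the other existing nodes.
    if len(nodes) > min_nodes:
        for candidate in nodes:
            utilization = candidate.cpu_requested / candidate.cpu_capacity
            if utilization >= utilization_threshold:
                continue
            if candidate.underutilized_minutes < unneeded_minutes:
                continue
            # Simplification: compare against the aggregate spare CPU of the
            # other nodes instead of checking pod-by-pod placement.
            spare = sum(
                n.cpu_capacity - n.cpu_requested for n in nodes if n is not candidate
            )
            if candidate.cpu_requested <= spare:
                return f"scale-down:{candidate.name}"

    return "no-op"
```

For example, with one busy node and one node that has been mostly idle for 15 minutes, `decide(nodes, [], min_nodes=1, max_nodes=3)` would pick the idle node for removal, while any unschedulable pod would trigger a scale-up as long as the maximum is not reached.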

Depending on the cloud provider, Cluster Autoscaler is built in or not (e.g., GCP provides it by default, while AWS does not).

The Kubernetes autoscaling SIG has done excellent work since then, but having also worked with Mesos, I was waiting for an initiative that would optimize pod placement and cut the bill as much as possible. This is where Karpenter, launched by AWS, entered the game.

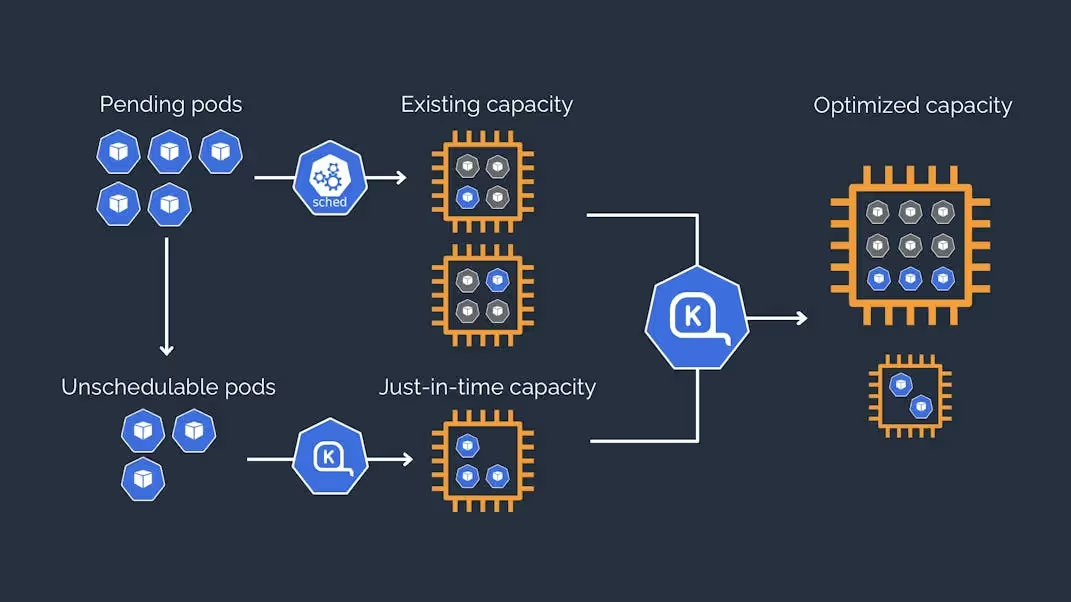

Welcome Karpenter

Karpenter automatically launches just the right compute resources to handle your cluster's applications. It is designed to let you take full advantage of the cloud with fast and simple compute provisioning for Kubernetes clusters.
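To make the idea of "just the right compute" more concrete, here is a minimal sketch that picks the cheapest instance type able to hold a set of pending pods. It is not Karpenter's actual algorithm: the `InstanceType` and `PodRequest` types, the `cheapest_fit` function, and the instance names and prices used with it are hypothetical, and the sketch ignores scheduling constraints, zones, and per-node overhead.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class InstanceType:
    """Hypothetical catalog entry; names and prices are made up."""
    name: str
    cpu: float
    memory_gib: float
    hourly_price: float


@dataclass(frozen=True)
class PodRequest:
    """Resource requests of one pending pod."""
    cpu: float
    memory_gib: float


def cheapest_fit(
    pods: List[PodRequest], catalog: List[InstanceType]
) -> Optional[InstanceType]:
    # Total resources the pending pods ask for.
    need_cpu = sum(p.cpu for p in pods)
    need_mem = sum(p.memory_gib for p in pods)

    # Keep only the instance types large enough to host all of them.
    candidates = [
        i for i in catalog if i.cpu >= need_cpu and i.memory_gib >= need_mem
    ]

    # Pick the cheapest one, or None when nothing in the catalog fits.
    return min(candidates, key=lambda i: i.hourly_price, default=None)
```

With a catalog of a 2-CPU, a 4-CPU, and an 8-CPU instance, two pods requesting 2.5 CPU in total would land on the 4-CPU instance rather than on a larger, more expensive one. The contrast with a fixed node group is that the node size is chosen from the pending workload instead of being decided in advance.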

Karpenter vs Cluster Autoscaler

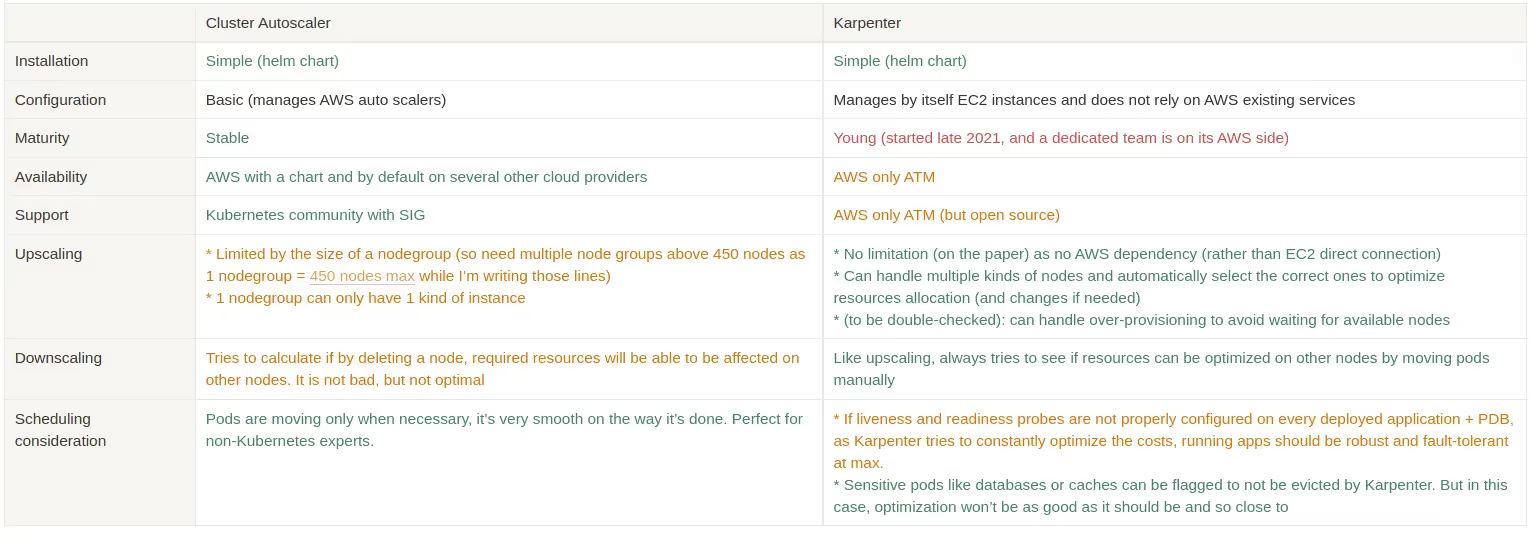

Karpenter can look like a simple alternative to Cluster Autoscaler, but it’s more than that. Both are really good, but before choosing one, you have to know their strengths and weaknesses. Karpenter becomes more useful once you have a significant workload, a situation where Cluster Autoscaler is not at its best.

I’ve tried to summarize both solutions:

Conclusion

Both Cluster Autoscaler and Karpenter are interesting solutions. Cluster Autoscaler is more stable and more widely used, while Karpenter is the challenger. If you want to use Karpenter, make sure your workload is suited to it; otherwise, Karpenter won’t be able to do its job properly and you won’t get its benefits.

At Qovery, we’re considering Karpenter as a cost-effective alternative. For now, we strongly think Cluster Autoscaler may be better suited to production usage (where applications commonly benefit from their cache) and Karpenter to pre-prod/staging/testing usage. We also hope other cloud providers will invest in Karpenter so that it becomes the new standard.

The engineering, product, and developer experience team behind the Qovery platform.
