10 Tips To Reduce Your Deployment Time with Qovery

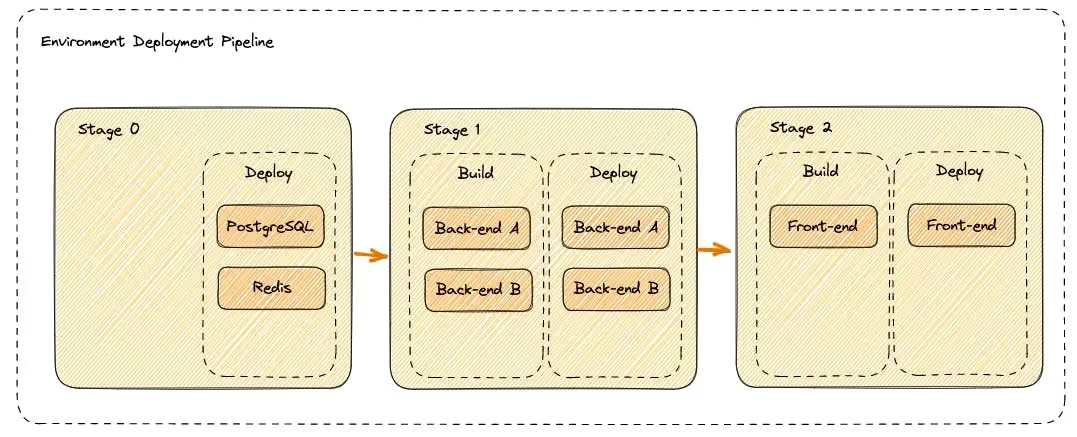

Overview of the Deployment Pipeline

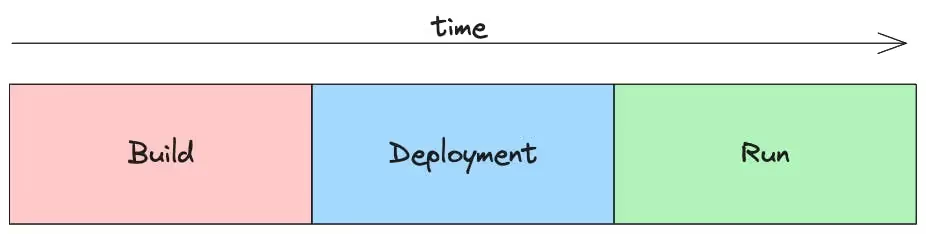

Understanding the deployment pipeline is the first step toward optimization.

The pipeline usually involves:

- Build: Compiling code and bundling dependencies into an executable package.

- Deployment: Transferring the package to the server and getting it ready for use.

- Run: The application is live and functional after the deployment is complete.

Tip: The "Build" phase often takes the most time in the entire deployment process. Knowing this helps you prioritize your optimization efforts.

To dig deeper, take a look at how the Qovery Deployment Pipeline works.

Diagnosing Deployment Time Drags

Before diving into optimizations, it's important to identify bottlenecks. The qovery environment deployment explain command can help you understand where your deployment spends most of its time, giving you actionable insights.

First, list your deployments.

Then get the details of the deployment of your choice.

In my case, the most expensive operation (almost 5 minutes) is the Deploy step of my Terraform RDS service in my first stage, Databases. I can't optimize the Terraform RDS service here because AWS needs time to spin up the resource, which is outside my control. However, I see several Build and Deploy steps that could be optimized.

If you remove the --level argument, you can see the details of each step. The output is verbose, but it is perfect once you know which step you want to optimize first.

Here are the most important ways to improve your deployment time.

Recommendations

Build Phase: Reducing Build Time

Optimize Your Dockerfile

Optimizing your Dockerfile is like fine-tuning an engine for maximum performance. Start by using a lightweight base image, such as an Alpine-based image for Node.js applications.
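As a minimal sketch of the base-image choice, the only change needed is the FROM line. The tag node:20-alpine is an assumption for illustration; use whichever Node.js version your application targets.

```dockerfile
# Default Debian-based image, considerably larger to pull and store:
# FROM node:20

# Alpine-based variant of the same Node.js version (tag assumed for illustration)
FROM node:20-alpine
```

Alpine uses musl instead of glibc, so packages with native add-ons may need extra build tools. Test the image before switching over.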

Multi-stage builds can further slim down your final image by keeping only what's necessary for runtime.
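A multi-stage build could look roughly like the sketch below. It assumes a Node.js project with a "build" script in package.json that writes to dist/ and an entry point at dist/index.js; both names are hypothetical and should be adapted to your project.

```dockerfile
# Stage 1: install all dependencies (including dev tools) and build the app
FROM node:20-alpine AS build
WORKDIR /app
COPY package*.json ./
RUN npm ci
COPY . .
# Assumes package.json defines a "build" script that outputs to dist/
RUN npm run build

# Stage 2: runtime image with production dependencies and build output only
FROM node:20-alpine
WORKDIR /app
ENV NODE_ENV=production
COPY package*.json ./
RUN npm ci --omit=dev
COPY --from=build /app/dist ./dist
# Hypothetical entry point produced by the build step
CMD ["node", "dist/index.js"]
```

Compilers, test tools, and source files stay in the first stage, so the image that gets pushed and pulled at deploy time is smaller.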

Be strategic with layer caching. Cache your project’s dependencies, which seldom change, before the application code, ensuring faster builds.
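The ordering described above can be sketched as follows, assuming an npm project with a package-lock.json. Docker reuses a cached layer as long as the instruction and the files it copies are unchanged, so the dependency install is skipped whenever only application code changes.

```dockerfile
FROM node:20-alpine
WORKDIR /app

# 1. Copy only the dependency manifests.
#    This layer is invalidated only when one of these two files changes.
COPY package.json package-lock.json ./

# 2. Install dependencies. Served from cache when step 1 is unchanged.
RUN npm ci

# 3. Copy the application code last, because it changes most often.
COPY . .

CMD ["npm", "start"]
```

If COPY . . came before npm ci, every code change would invalidate the install layer and trigger a full reinstall.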

Read more about Dockerfile optimization.

Use a Pre-built Container Image

The traditional way of deploying applications often includes building the entire stack from scratch. While this gives you control, it's often time-consuming. Utilizing a pre-built image can dramatically speed up your build time because the foundation is already laid out. Here's how.

Traditional Node.js Dockerfile:
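The original listing is not available here, so the following is only a sketch of what such a Dockerfile typically looks like. It assumes a "start" script is defined in package.json.

```dockerfile
FROM node:20-alpine
WORKDIR /app

# Dependencies are downloaded and installed whenever this layer is not cached
COPY package*.json ./
RUN npm install

COPY . .

# Assumes package.json defines a "start" script
CMD ["npm", "start"]
```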

Using a Pre-built Container Image:

Assume you have a pre-built image prebuilt-node-image that already contains your node_modules and other dependencies.
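Under that assumption, the application Dockerfile might shrink to something like this sketch. prebuilt-node-image is the hypothetical image named above, assumed here to have node_modules installed under /app and to be rebuilt whenever your dependencies change.

```dockerfile
# Hypothetical base image that already contains node_modules in /app
FROM prebuilt-node-image
WORKDIR /app

# Only the application code is copied; no install step runs here.
# Assumes node_modules is excluded from the build context via .dockerignore,
# so the pre-installed packages are not overwritten.
COPY . .

# Assumes package.json defines a "start" script
CMD ["npm", "start"]
```

The trade-off is that the base image must be kept in sync with package.json, otherwise the application runs against stale dependencies.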

The second approach eliminates the need to download and install Node packages each time, saving you precious minutes or even hours, depending on your application's complexity.

Utilize Build Tools Like Depot

Think of your build process as a production line. The efficiency of this line determines how fast your product (your application) gets to market. Qovery provides highly optimized builders with 4 vCPUs and 8 GB of RAM for each build and supports up to four builders running in parallel, but there are scenarios where you might need additional speed. This is where tools like Depot come in handy.

Depot caches your build artifacts, ensuring that repeated builds get faster over time. It's like adding another layer of efficiency to an already optimized assembly line. So, while Qovery's builders are robust and capable, you have the option to employ Depot for that extra dash of speed.

Frontend Optimization with Nx

When it comes to speeding up frontend builds, Nx is like your turbocharger. By using computation caching and parallel task execution, Nx significantly reduces build times, especially in monorepo settings where you have multiple frontend applications.

Read more about why the Qovery Frontend team uses Nx.

Mono Repository Optimization

In a sprawling monorepo, it's inefficient to rebuild and redeploy every app for every minor change. Use Qovery deployment restrictions to act like a smart sensor, triggering builds only for the apps that have changed, thereby preventing unnecessary builds.

Deployment Phase: Quicker Deployments

Adequate CPU and RAM Allocation

Choosing the right amount of CPU and RAM for your app is like setting the correct tire pressure on a race car. Too low and you compromise speed; too high and you risk a blowout. Adjusting these settings appropriately ensures that your applications start faster and run smoother.

Avoid Building at Runtime for SSR Apps

Building at runtime, especially for Server-Side Rendering (SSR) apps, is like assembling furniture while you're trying to move into a new apartment: it's neither the time nor the place. This approach delays application startup and strains system resources. Always pre-build your SSR applications during the Build phase to achieve faster and more stable deployments.

Aggressive Deployment Strategies like "Recreate"

Choosing the "Recreate" deployment strategy is akin to using a sledgehammer when you're in a rush; it's forceful but effective. This strategy stops the old application instances and immediately spins up the new ones, cutting the waiting time dramatically. However, use it cautiously: because the old instances stop before the new ones are ready, it causes downtime. It's ideal for test environments but not recommended for production.

Runtime Phase: Minimizing Startup Time

Efficient Health Checks

Configuring efficient health checks is like having a well-trained medical team at a sports event. They quickly assess the situation and make informed decisions, allowing for smooth and fast transitions. Proper health checks enable quicker deployments and offer better resilience against failures.

To learn more about health checks and probes, read this article.

Continual Monitoring

Finally, never underestimate the power of constant vigilance. Monitoring your application is like having a seasoned coach who can spot even the tiniest inefficiencies and recommend immediate improvements. Consistent monitoring of system performance allows you to proactively optimize your settings, ensuring that future deployments are faster and more efficient.

Conclusion

Reducing deployment time is an ongoing process that involves both platform and user-side optimizations. While Qovery automates many tasks to make deployments faster, the strategies outlined above will help you make your pipeline even more efficient. So go ahead, implement these practices and shave off those valuable seconds or even minutes from your deployment time.

Happy Deploying!
