How to Manage the High Cost of Scaling on Heroku

Let’s begin with the challenges of scaling on Heroku.

The Challenges of Scaling on Heroku

Scaling applications on Heroku can come with several challenges that can be difficult for businesses to manage. Let’s explore two of the most common challenges: cost and performance.

A. Cost Challenges

Heroku's pricing is built around dynos, which are individual containers that each run a single instance of an application. Heroku provides a range of dynos, some suited to hobbyists and development environments and others to production workloads.

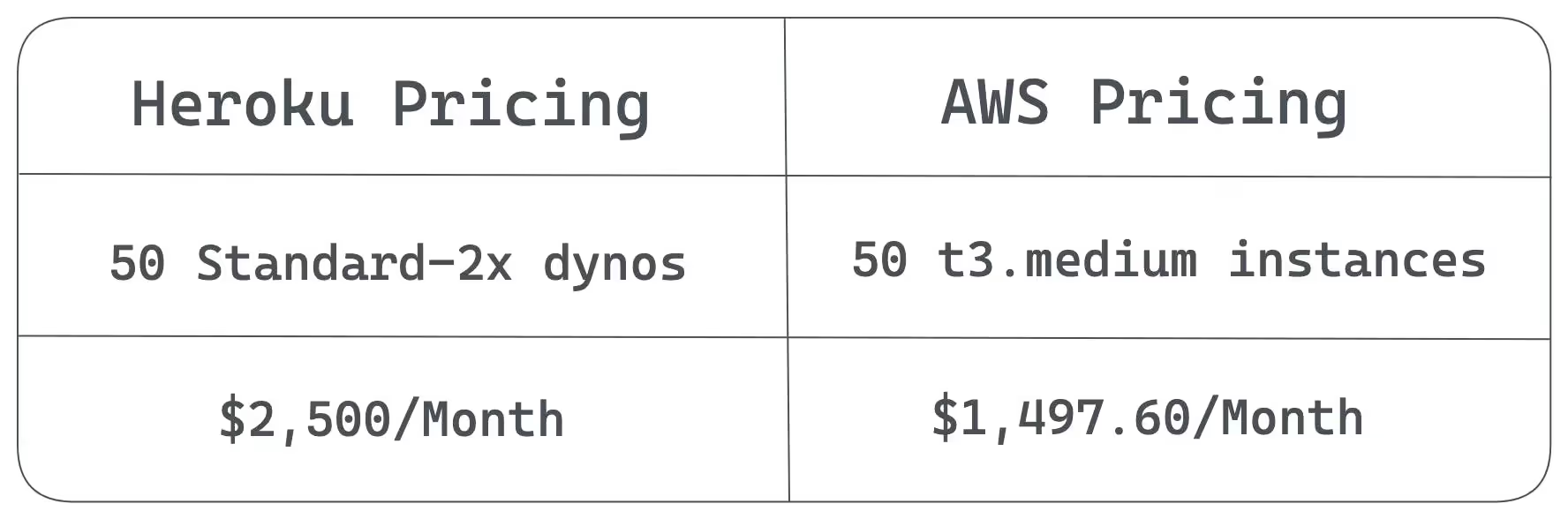

An enterprise application that scales up uses more resources and more dynos to maintain performance, which increases the overall infrastructure cost. Consider a large-scale application that expects to handle 1,000 concurrent users. To meet this demand, it uses 50 Standard-2X dynos on Heroku. Paying for these dynos would cost $2,500 per month (50 x $50). The price would increase further if the application required significantly more resources and switched to Performance-L dynos, which are costlier than the standard dynos.

The above figure does not include the cost of Heroku add-ons such as databases, logging, caching, or any external services. Note that Heroku is built on top of AWS infrastructure, so it adds its own cost for managing the underlying AWS resources on top of the standard AWS cost.

If we compare this cost with AWS, for example:

The cost of a t3.medium instance in the US East (N. Virginia) region is $0.0416 per hour.

For 50 t3.medium instances, the cost would be:

$0.0416 per hour * 50 instances = $2.08 per hour

For a month with 30 days, the total cost would be:

$2.08 per hour * 24 hours * 30 days = $1,497.60

That is $1,002.40 less per month than the $2,500 Heroku bill for the same number of instances, a difference of roughly 40%.
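The arithmetic above can be reproduced with a short script. This is only a sketch that reuses the figures quoted in this article (50 instances, $50 per Standard-2X dyno per month, $0.0416 per hour for a t3.medium, a 30-day month); the function names are illustrative, and real bills will also include add-ons, storage, and data transfer.

```python
HOURS_PER_DAY = 24
DAYS_PER_MONTH = 30  # the article assumes a 30-day month


def heroku_monthly_cost(dyno_count: int, price_per_dyno_month: float) -> float:
    """Dynos are priced per month, so the cost is a simple product."""
    return dyno_count * price_per_dyno_month


def ec2_monthly_cost(instance_count: int, price_per_hour: float) -> float:
    """On-demand instances are priced per hour, so scale up to a month."""
    return instance_count * price_per_hour * HOURS_PER_DAY * DAYS_PER_MONTH


# Figures taken from the comparison in this article.
heroku = heroku_monthly_cost(50, 50.0)
aws = ec2_monthly_cost(50, 0.0416)
savings = heroku - aws

print(f"Heroku: ${heroku:,.2f} per month")
print(f"AWS:    ${aws:,.2f} per month")
print(f"Saved:  ${savings:,.2f} ({savings / heroku:.1%} of the Heroku bill)")
```

Changing the instance count or the prices makes it easy to rerun the comparison for your own workload.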

B. Performance Challenges

Cost is not the only worry when scaling your applications on Heroku. You may also run into performance issues, including slow response times, a congested database, an unresponsive application, increased latency, and the need for additional resources. You may not experience these issues with a small application, but as you add more dynos to your infrastructure, they start to creep in. Here is a summary of possible performance issues:

Dyno limitations: Heroku is a Platform as a Service (PaaS) provider that operates on a dyno-based architecture. Dynos are lightweight containers that run the application code, and each dyno has a limited amount of CPU, memory, and disk space. If the number of concurrent users of your application increases, you may need to add more dynos to handle the load. However, the limitations of the dyno-based architecture, such as cold start time, resource contention, and deployment complexity, can severely impact your application's performance.

No automatic upscaling of resources: Consider a scenario where you expect a huge traffic load on your system on a particular occasion. You cannot ask Heroku to automatically upgrade your dynos when the load increases and revert to the original dynos when the load returns to normal; you have to do it manually. Kubernetes does have a Vertical Pod Autoscaler, but Heroku does not offer a managed Kubernetes service, so you would need to manage it yourself. Heroku supports horizontal scaling, but that makes your architecture more complex. Heroku's autoscaling is a paid feature and is only available on Performance and Private plans.
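To make the idea of automatic horizontal scaling concrete, here is a minimal sketch of a proportional scaling rule, similar in spirit to the formula Kubernetes documents for its Horizontal Pod Autoscaler. It is not Heroku's or Qovery's actual algorithm; the function name, the load metric, and the default limits are assumptions chosen for illustration.

```python
import math


def desired_instances(
    current: int,
    observed_load: float,
    target_load: float,
    min_instances: int = 1,   # hypothetical lower bound
    max_instances: int = 50,  # hypothetical upper bound (a cost cap)
) -> int:
    """Scale the instance count in proportion to observed vs. target load.

    The load can be any per-instance metric, for example average CPU
    percentage or requests per second per instance.
    """
    if current < 1 or target_load <= 0:
        raise ValueError("current must be >= 1 and target_load must be > 0")
    raw = math.ceil(current * observed_load / target_load)
    # Clamp so the scaler never drops to zero or exceeds the budgeted maximum.
    return max(min_instances, min(max_instances, raw))


# Traffic spike: 10 instances running at 90% CPU against a 60% target.
scale_up = desired_instances(10, observed_load=90.0, target_load=60.0)
# Quiet period: the same 10 instances running at 30% CPU.
scale_down = desired_instances(10, observed_load=30.0, target_load=60.0)
print(scale_up, scale_down)
```

An autoscaler evaluates a rule like this on a schedule, which is the step you would otherwise have to perform by hand before and after a traffic peak.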

Database limitations: Heroku's managed database services can also pose performance issues when scaling. Heroku's managed databases limit the number of connections that can be open at any given time. If your application exceeds these limits, you will see slow response times and declining performance because your application is waiting for a database connection to become available. These databases also have resource limits, and as the volume of data and traffic increases, you may need to add more resources to handle the load. But adding more resources can also result in increased contention for CPU and memory, which can affect performance.
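The connection limit is easy to hit because every dyno you add opens its own pool of connections. The sketch below estimates the total and works out the largest pool size that still fits. The limit of 400 connections, the two processes per dyno, and the pool size of 5 are hypothetical values for illustration; check the real limit of your database plan.

```python
def connections_needed(dynos: int, processes_per_dyno: int, pool_size: int) -> int:
    """Worst-case open connections if every process fills its pool."""
    return dynos * processes_per_dyno * pool_size


def max_pool_size(
    connection_limit: int,
    dynos: int,
    processes_per_dyno: int,
    reserved: int = 0,
) -> int:
    """Largest per-process pool that stays under the plan's limit.

    `reserved` keeps some connections free for migrations, consoles,
    and monitoring tools.
    """
    return (connection_limit - reserved) // (dynos * processes_per_dyno)


PLAN_LIMIT = 400  # hypothetical plan limit, not a real Heroku figure

needed = connections_needed(dynos=50, processes_per_dyno=2, pool_size=5)
safe_pool = max_pool_size(PLAN_LIMIT, dynos=50, processes_per_dyno=2, reserved=10)

print(f"Needed: {needed}, limit: {PLAN_LIMIT}, over limit: {needed > PLAN_LIMIT}")
print(f"Largest safe pool size per process: {safe_pool}")
```

When the safe pool size becomes too small to be useful, the usual options are a larger database plan or a connection pooler in front of the database.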

Alternative Solutions for Scaling Applications

Qovery is a Kubernetes management platform that simplifies the deployment of applications to cloud infrastructure. It allows developers to easily deploy their applications on AWS and other cloud service providers.

Qovery helps growing businesses and enterprises manage increasing costs and complexity when scaling applications for an increased workload. Qovery benefits businesses in many ways. Let’s go through them one by one.

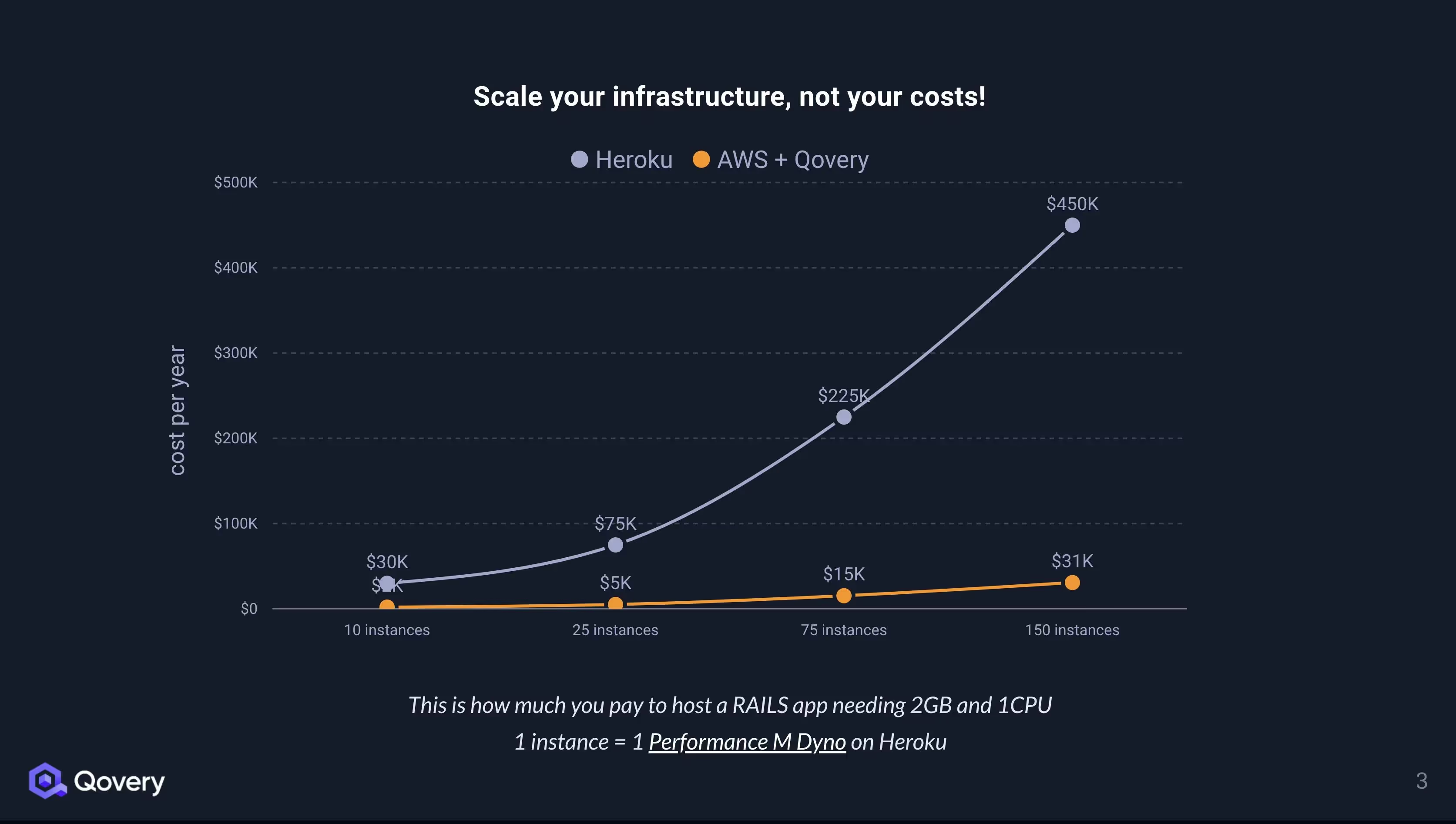

Qovery's pricing model is not based on dynos or add-ons but has a fixed monthly price. Since Qovery operates on your own cloud infrastructure, you can take advantage of the lower pricing cloud providers offer (as seen above with the AWS price comparison), resulting in greater cost optimization flexibility.

Qovery provides cost optimization features that can reduce your bill by up to 80% compared to other PaaS solutions like Heroku.

Combined with AWS, Qovery offers more cost-effective scaling options that won't break the bank.

See more details in this article: Five Ways to Decrease Your Infrastructure Costs with Qovery.

Qovery dynamically provisions new resources and scales up and down based on the workload, enabling organizations to scale their applications easily. The ability to automate the dynamic provisioning of resources with no downtime improves application release workflow and gives organizations a market advantage.

Qovery facilitates cloud installation for businesses. Utilizing self-service portals, developers can rapidly launch their applications leveraging the platform's intuitive user interface.

Qovery enables companies to select their cloud provider and infrastructure, giving them greater control over their applications. Infrastructure can be customized and optimized to fit the needs of businesses.

Case Studies

Qovery has helped hundreds of companies scale their products without incurring heavy costs. Below is an example case study of Papershift, where Qovery helped the company make a smooth transition from Heroku and significantly reduced its scaling costs. Some quick highlights of this journey are the following:

Frictionless 'Heroku to AWS' Migration

Papershift, a worker management service, was growing rapidly. As the company scaled, Heroku's pricing model and limited customization options became pain points. Papershift moved from Heroku to Qovery because it was cheaper. The Qovery team helped Papershift achieve a smooth transition of data and applications.

Flexible Infrastructure

Qovery lets Papershift choose its cloud provider and infrastructure. Papershift minimized expenses and optimized resource use by customizing its infrastructure. Heroku's restricted infrastructure and customization choices prevented this flexibility.

Flexible and Predictable Costs

With Qovery's fixed monthly pricing, businesses can plan and budget their costs more accurately without worrying about unexpected hidden charges. The ability to select the right cloud provider and desired infrastructure of your choice also results in cost savings because you can opt for the most cost-efficient cloud provider and optimal infrastructure per your needs.

Efficient Scaling

Papershift's apps scaled faster and more efficiently with Qovery's automatic scaling. Papershift avoided manual intervention and downtime by provisioning new resources to meet growing application consumption. This was better than Heroku, where human intervention in scaling caused delays and errors.

Simplified Deployment

With its powerful and user-friendly platform, Qovery made Papershift's cloud deployment easier. Papershift used Qovery to simplify the process of deploying and managing applications. With Qovery, Papershift can quickly and easily deploy its applications on AWS without worrying about infrastructure setup, configuration, or maintenance. Qovery automates the entire process, from code to production, so the Papershift team can focus on what really matters: building great applications.

Qovery has made a big difference for many businesses by helping them save costs, improve their application workflow, and easily scale their apps. Its transparent monthly pricing and the option to pick your favorite cloud provider and setup help companies use resources better and spend less. On top of that, Qovery's automatic scaling and easy-to-use deployment mean quicker, smoother application releases. Qovery's range of features makes it a great choice instead of platforms like Heroku, especially for businesses wanting to get the most out of their infrastructure and cut costs as they scale their apps.

Conclusion

Scaling applications is crucial for businesses to meet the growing demands of their customers and stay competitive. While Heroku is a popular platform for scaling, it can become prohibitively expensive for companies with large-scale applications, and performance issues can arise.

Qovery offers an alternative solution to help businesses manage the cost and complexity of scaling applications. Qovery's simplicity, automation features, and ability to scale with minimal downtime make it an attractive choice for companies looking to optimize their scaling efforts.

Through case studies, we have seen how Qovery has helped companies save money, improve performance, and scale their applications more efficiently. With Qovery, businesses can focus on their core competencies while leaving the management of their scaling infrastructure to the experts.

If you're struggling with the high cost and complexity of scaling on Heroku, give Qovery a try. Sign up for a free account today and see how Qovery can help you scale your applications more efficiently!
