Why You Should Use Docker Over Buildpacks

Common use cases using Buildpacks

Over time, we have seen a wide variety of users and projects using Buildpacks to run their stack on Qovery.

The most common ones are:

- Users coming from Heroku: they arrive with an existing stack deployed on Heroku, and using Buildpacks is the easiest way to make it work almost anywhere else.

- Users with a very "common" stack who want to move fast on their product without having to deal with how containers are built.

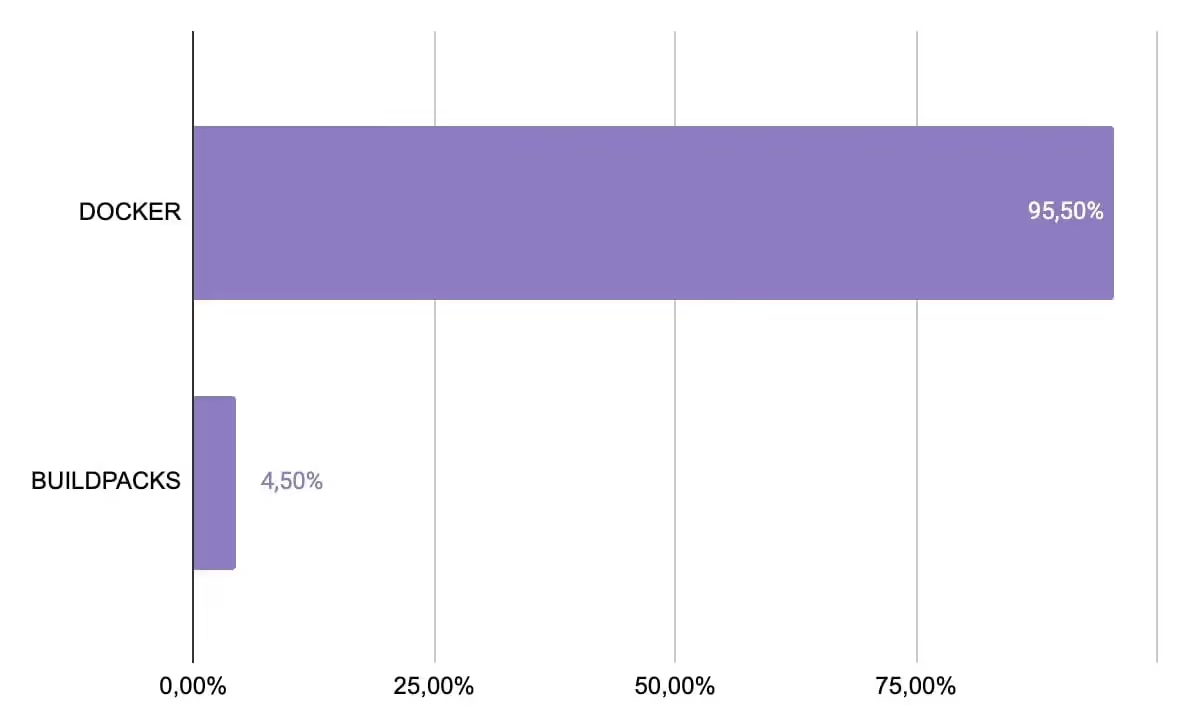

Buildpacks usage at Qovery

What are Buildpacks users looking for?

Buildpacks users are seeking:

- Easy setup: configuration takes almost no time, especially if you are using a common stack and framework (looking at you, Node.js).

- Simplified dependency management: Buildpacks let developers manage their applications' dependencies easily. They automatically detect, download, and install the required dependencies, such as libraries, frameworks, and runtime environments, during the build process. This spares developers manual configuration and helps ensure that applications have the dependencies they need to run reliably and securely.

- Portability and consistency: Buildpacks provide a consistent way to package applications and their dependencies into containers, making them highly portable across platforms and environments. They abstract away the underlying infrastructure, so developers can build a containerized application once and run it anywhere, whether in a local development environment, in staging, or in production. This helps keep the build process consistent and reproducible, reducing the risk of deployment issues caused by platform differences.

Main issues our users faced over the last months with Buildpacks

But using Buildpacks also comes at a cost:

- Limited flexibility: Buildpacks come as a predefined set covering popular programming languages and frameworks, but they may not cover all possible use cases. If you have unique requirements or dependencies, you may need to create your own custom buildpack, which can be more complex and time-consuming.

- Limited customization: Buildpacks are designed to be opinionated and may not provide the level of customization some applications require. This can be a limitation for applications with complex build requirements or unique configurations that the available buildpacks do not support.

- Vendor lock-in: while Buildpacks are designed to be portable across containerization platforms, there is a risk of vendor lock-in if you rely heavily on platform-specific buildpacks. Switching to a different platform may require rewriting or modifying the buildpacks, which can be time-consuming and may impact portability.

- Dependency management: Buildpacks manage dependencies automatically, which is convenient, but this can also make dependencies harder to track and control, especially in large or complex applications. Ensuring proper dependency management and version control may require additional effort.

- Lack of fine-grained control: Buildpacks abstract away some of the details of the container image-building process, which means developers may have limited control over the resulting image. For example, if you need to optimize the size or performance of the container image, you may need to use other tools or techniques outside of Buildpacks.

Here's a non-exhaustive list of issues our users faced:

- Failed to launch container (something changed on the Buildpacks side)

- Buildpacks not running the command the user expects

- Buildpacks limitation: user needs a specific PHP extension

- Buildpacks dropping compatibility with a builder version

- Buildpacks timeout

- Limitations when setting up proper file permissions

The main root causes are customization limitations, users needing an advanced setup that Buildpacks cannot easily provide, or a Buildpacks image update that is either buggy or drops some compatibility.
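For comparison, several of the issues listed above (a missing PHP extension, file permissions, an unexpected start command) come down to a few explicit lines in a Dockerfile. The following is a minimal sketch, not a recommended production setup. It assumes the official `php:8.2-apache` image, uses `pdo_mysql` purely as an example extension, and the `storage` directory is a hypothetical writable folder.

```dockerfile
# Assumption: the official PHP image with Apache; pin the tag you actually need.
FROM php:8.2-apache

# Install a PHP extension with the helper script shipped in the official image.
# pdo_mysql is only an example; replace it with the extension your app requires.
RUN docker-php-ext-install pdo_mysql

# Copy the application and set ownership in the same step.
COPY --chown=www-data:www-data . /var/www/html

# Hypothetical writable directory: create it and set explicit ownership and permissions.
RUN mkdir -p /var/www/html/storage \
    && chown www-data:www-data /var/www/html/storage \
    && chmod 750 /var/www/html/storage

# State the start command explicitly so there is no doubt about what runs.
# apache2-foreground is the default command of the php:*-apache images.
CMD ["apache2-foreground"]
```

Because the base image tag is pinned in the file, an upstream change only reaches your build when you decide to bump it.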

Build your apps with confidence: use Docker

At Qovery, we observe that our more mature users tend to use Docker.

While Buildpacks seem to be the go-to choice to spin up a very common stack from the ground up without much effort, they will become limiting at some point.

Docker, on the other hand, is a bit more complicated to start with (not really, check this guide to write the first Dockerfile for your application 😊). But since there are a lot of examples everywhere, finding a simple one to start with for your stack should be pretty easy, and it will allow future fine-grained customization and optimization (be it image size, performance, etc.).
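To give an idea of what such a starting point can look like, here is a minimal sketch of a multi-stage Dockerfile for a Node.js application. Everything specific in it is an assumption made for illustration: the `node:20-alpine` base image, a `package-lock.json` next to `package.json`, a `build` script that outputs to `dist/`, an entry point at `dist/index.js`, and port 3000.

```dockerfile
# Build stage: install all dependencies (including dev ones) and build the app.
# Assumes package.json defines a "build" script that writes to dist/.
FROM node:20-alpine AS build
WORKDIR /app
# Copy the manifests first so the dependency layer is cached between builds.
COPY package*.json ./
RUN npm ci
COPY . .
RUN npm run build

# Runtime stage: only production dependencies and the build output,
# which keeps the final image smaller.
FROM node:20-alpine
WORKDIR /app
ENV NODE_ENV=production
COPY package*.json ./
RUN npm ci --omit=dev
COPY --from=build /app/dist ./dist
# Run as the unprivileged "node" user provided by the official image.
USER node
# Hypothetical port; use the one your application listens on.
EXPOSE 3000
CMD ["node", "dist/index.js"]
```

Splitting the build and runtime stages is one example of the image-size control that is hard to get with Buildpacks: build tools and dev dependencies stay in the first stage and never reach the image you deploy.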

Take it easy; use Docker.

Bonus

Buildpacks don't have the same level of support and integration in our product, which makes them less feature-complete on Qovery's stack.

Using Buildpacks on Qovery's Kubernetes management platform, you are missing the following features:

- Parallel deployment (aiming to speed up build & deployment): parallel builds within the same stage are not supported

- ARM architecture

- Scaleway: our current integration has shown some instability between Buildpacks and Scaleway registries (we are working on it but have no clear insight yet).
