7 Common Kubernetes Pitfalls in 2023

Kubernetes is the industry's most popular open-source platform for container orchestration. It helps you automate many tasks related to container management, and companies use it to address challenges in deployment, scalability, testing, and management. However, Kubernetes is complex and has a steep learning curve. In this article, we will go through some common Kubernetes pitfalls that many companies fall into as they embrace Kubernetes to scale their business. While discussing each problem, we will also highlight how to avoid or fix it. Finally, we will discuss the best solution for getting the most out of Kubernetes without facing its complexities.

Morgan Perry

September 3, 2022 · 5 min read

[Last updated on 07/26/2023]

Let's start with the first error related to the incorrect use of labels and selectors.

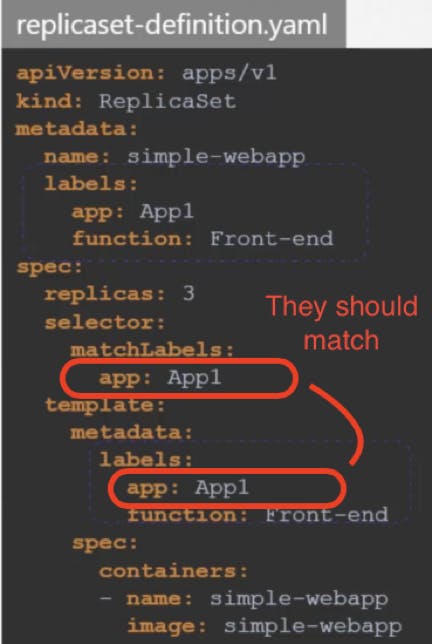

#1. Incorrect Labels and Selectors

One of the most frequent mistakes beginners make is using labels and selectors incorrectly in their configuration. Labels are key/value pairs attached to objects such as pods and services. Selectors let you identify the objects that carry a given set of labels. If a Deployment's selector does not match the labels in its pod template, the API server rejects the Deployment with a validation error. If a Service's selector matches no pods, no error is raised, but the Service has no endpoints and its traffic goes nowhere. The example below illustrates this concept. Note that labels are case-sensitive, so `app: Web` and `app: web` are different labels. Make sure you use correct labels and selectors in your YAML files, and check carefully for typos.

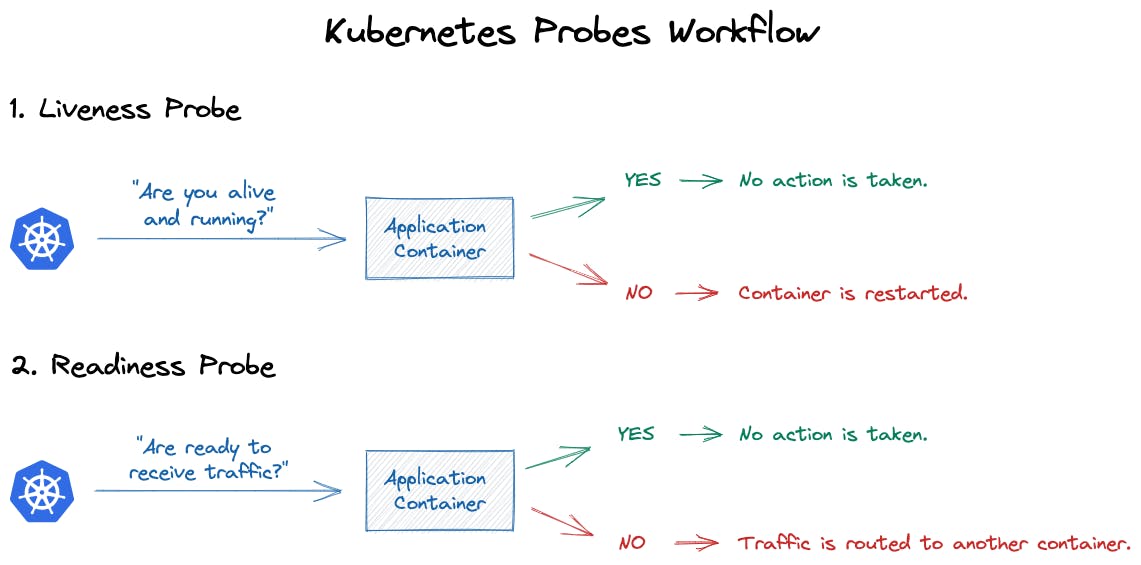
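Here is a minimal sketch of matching labels and selectors in a Deployment. The same `app: web` label appears in `spec.selector.matchLabels` and in the pod template's `metadata.labels`. The name `web` and the image `registry.example.com/web:1.4.2` are hypothetical placeholders.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web                  # hypothetical Deployment name
spec:
  replicas: 2
  selector:
    matchLabels:
      app: web               # must match the pod template labels exactly (case-sensitive)
  template:
    metadata:
      labels:
        app: web             # "app: Web" here would NOT match the selector above
    spec:
      containers:
        - name: web
          image: registry.example.com/web:1.4.2   # hypothetical image
```

Suppose you change only one of the two `app: web` values, for example to `app: Web`. The selector and template labels then disagree, and `kubectl apply` should fail with a validation error instead of creating the Deployment.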

#2. Ignoring Health Checks

When deploying your services to Kubernetes, health checks play a crucial role in keeping them running, yet they are often under-utilized. Health checks let Kubernetes keep an eye on the health of your pods and their containers. Kubernetes offers three types of probes for this:

- The startup probe tells Kubernetes whether the application inside a container has started. Until it succeeds, the liveness and readiness probes do not run.
- The liveness probe tells Kubernetes whether your application is still alive. If it keeps failing, the kubelet restarts the container.
- The readiness probe tells Kubernetes whether your application is ready to receive traffic. While it fails, the pod is removed from the endpoints of any Service that selects it.

To learn more about Kubernetes probes, you can read the official Kubernetes documentation.
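The pod spec below sketches how the three probes might be configured together. It assumes a hypothetical application that listens on port 8080 and serves HTTP health endpoints at `/healthz` and `/ready`. Adjust the paths, ports, and timings to your own service.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: web                    # hypothetical Pod name
spec:
  containers:
    - name: web
      image: registry.example.com/web:1.4.2   # hypothetical image
      ports:
        - containerPort: 8080
      startupProbe:              # gives the app up to 30 x 10s = 300s to start
        httpGet:
          path: /healthz         # hypothetical endpoint
          port: 8080
        failureThreshold: 30
        periodSeconds: 10
      livenessProbe:             # container is restarted if this keeps failing
        httpGet:
          path: /healthz
          port: 8080
        periodSeconds: 10
      readinessProbe:            # pod receives Service traffic only while this succeeds
        httpGet:
          path: /ready           # hypothetical endpoint
          port: 8080
        periodSeconds: 5
```

Liveness and readiness checks do not run until the startup probe succeeds. The generous `failureThreshold` on the startup probe therefore protects a slow-starting application from being restarted by the liveness probe before it is up.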

#3. Using the Default Namespace for all Objects

Namespaces allow you to group related resources, such as deployments and services. They are essential when multiple teams work on the same product or on a microservices-based application. In a development environment, using the default namespace might not be an issue. In production, however, it can cause problems if you run commands without specifying a namespace. If neither the command nor the manifest specifies a namespace, kubectl does not report an error. It simply applies the service or deployment to the namespace of your current context (usually `default`) instead of the one you intended. See the example below.

Instead of:

    kubectl apply -f deployment.yaml

Run:

    kubectl apply -f deployment.yaml --namespace production-api

#4. Using the 'Latest' Tag

Many users assume the `latest` tag always points to the most recently pushed version of an image, but that is not guaranteed. `latest` is simply the default tag applied when no tag is given, and it only moves when someone pushes an image with that tag. Because every revision of a deployment that uses `latest` references the same tag, rolling back does not reliably restore an earlier version of the image.

Using explicit version tags ensures you always deploy the version you intend. It also lets your teams roll back to a previous known-good version by its tag.
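The manifest below is a minimal sketch of pinning an explicit version in a Deployment's container spec. It assumes a hypothetical image `registry.example.com/api` that is published with semantic version tags.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: api                    # hypothetical Deployment name
spec:
  replicas: 2
  selector:
    matchLabels:
      app: api
  template:
    metadata:
      labels:
        app: api
    spec:
      containers:
        - name: api
          # Avoid: image: registry.example.com/api:latest
          image: registry.example.com/api:1.4.2   # explicit, hypothetical version tag
```

Each rollout now records a distinct tag in its pod template. As a result, `kubectl rollout undo deployment/api` returns to the previous revision's template, and therefore to the previous image version, instead of pulling `:latest` again.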

#5. Lack of Monitoring and Logging

A common pitfall when setting up Kubernetes is neglecting proper monitoring and logging. You should set up a log aggregation server and a monitoring system to keep an eye on your application. Together they help you spot bottlenecks in your system and measure and optimize the performance of your Kubernetes clusters. A sound monitoring system includes alerts and notifications on resource metrics such as CPU and memory usage. As mentioned previously, Kubernetes is complex, so you need proper monitoring and logging to troubleshoot and resolve issues.

Adopting a sound monitoring system is essential for the smooth functioning and proactive management of your Kubernetes environment. Kubernetes' native monitoring tools lack many useful features, such as log aggregation, audit-event tracking, and alert notifications. For that reason, it is usually better to use a third-party tool for logging and monitoring. Check out our article on the 17 Best DevOps Tools to Use in 2022 for Infrastructure Automation and Monitoring.

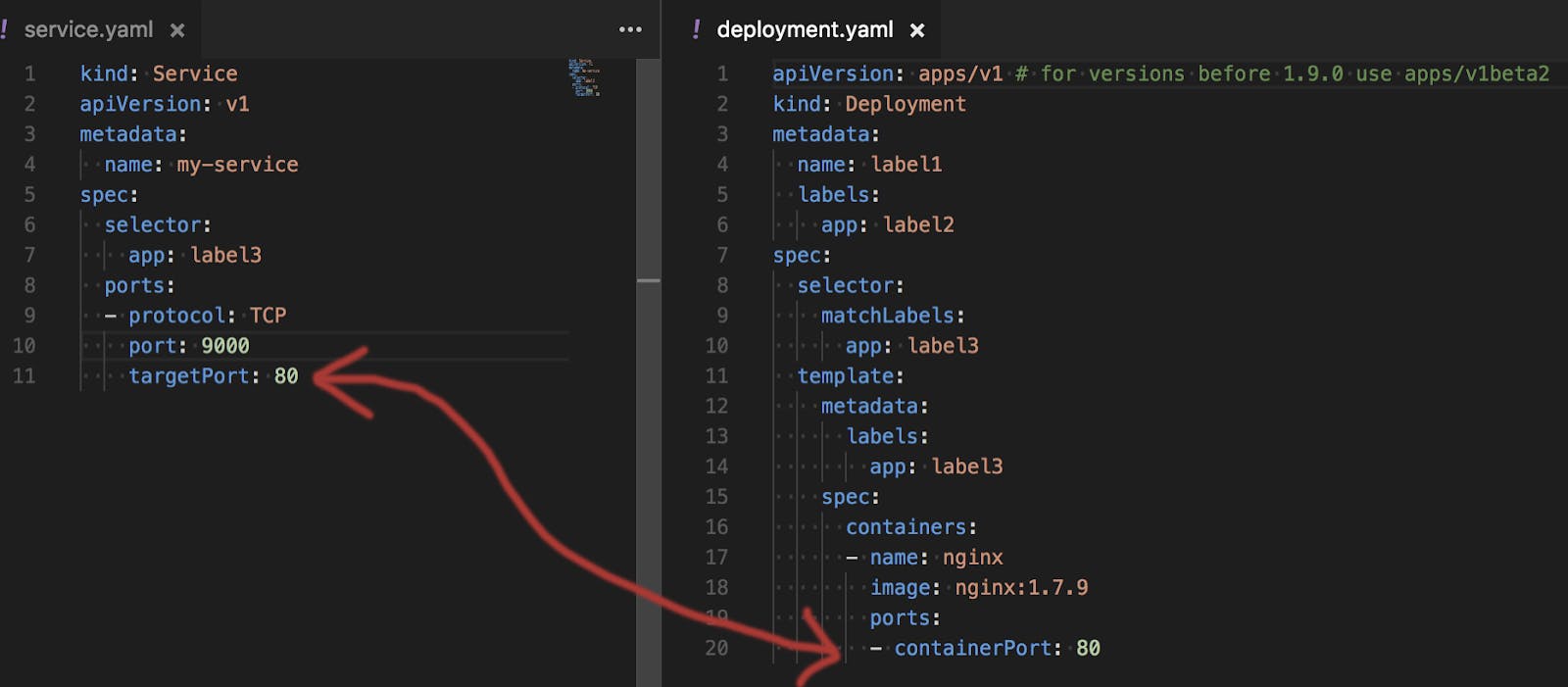

#6. Wrong Container Port Mapped to a Service

If you see "connection refused" errors or get no reply from your containers, the Service may be forwarding traffic to the wrong container port. This mistake is easy to make because a Service has two similarly named port fields, `port` and `targetPort`, and it is easy to mix them up.

Note that `targetPort` is the destination port on the pods, the port to which the Service forwards traffic. This is illustrated in the image below. The `port` field, by contrast, is the port the Service exposes to clients. If you omit `targetPort`, it defaults to the same value as `port`.
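The sketch below shows how the two fields relate. It assumes a hypothetical application container that listens on port 8080 and should be reachable inside the cluster on port 80. The port the container listens on and the Service's `targetPort` must agree, while `port` is what clients connect to.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: web                  # hypothetical Service name
spec:
  selector:
    app: web                 # must match the labels on the target pods
  ports:
    - protocol: TCP
      port: 80               # port the Service exposes to clients
      targetPort: 8080       # port the container actually listens on
---
apiVersion: v1
kind: Pod
metadata:
  name: web
  labels:
    app: web
spec:
  containers:
    - name: web
      image: registry.example.com/web:1.4.2   # hypothetical image
      ports:
        - containerPort: 8080                 # documents the app's port; keep it equal to targetPort
```

Suppose `targetPort` were set to 80 here while the application listens on 8080. Clients connecting to the Service on port 80 would then typically see "connection refused".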

#7. CrashLoopBackOff Error

Another frequent error in Kubernetes is `CrashLoopBackOff`. It occurs when one of a pod's containers repeatedly starts and then exits. Kubernetes keeps restarting the container and waits a little longer before each new attempt (the back-off), so the container is stuck in a start-crash-start-crash loop.

There can be many causes for this error, such as a simple typo in a configuration file, insufficient memory, or an incorrect configuration. To find and fix the root cause, check the pod description with `kubectl describe pod <pod-name>`. Also check the logs of the crashed container with `kubectl logs <pod-name> --previous`.
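The pod description may show the container's last state as `Terminated` with reason `OOMKilled`. That means the container exceeded its memory limit. Assuming that is the cause, you can give the container more memory through its `resources` settings, as in the sketch below. The pod name, image, and values are hypothetical and should be sized from your application's observed usage.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: worker                 # hypothetical Pod name
spec:
  containers:
    - name: worker
      image: registry.example.com/worker:2.0.1   # hypothetical image
      resources:
        requests:
          memory: "256Mi"      # memory the scheduler reserves for the container
          cpu: "250m"
        limits:
          memory: "512Mi"      # exceeding this gets the container OOMKilled
```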

#Wrapping Up

This article discussed some of the most frequent pitfalls of Kubernetes. We also briefly discussed various preventive and corrective measures to combat these issues. As powerful as Kubernetes is, it requires an equally powerful skillset to set up and maintain the Kubernetes environment. With a solution like Qovery, you can take full advantage of Kubernetes without managing its complexities.

#About Qovery

Qovery makes it easy to set up, provision, and automatically tear down full-fledged deployment environments on AWS. Qovery helps accelerate the deployment of your applications in Kubernetes clusters. It provides Kubernetes-empowered on-demand environments with built-in security, cost optimization, and governance.

To experience the "Qovery for Developers" product first-hand, start a 14-day free trial.

Sign up here, no credit card required!

Your Favorite DevOps Automation Platform

Qovery is a DevOps Automation Platform Helping 200+ Organizations To Ship Faster and Eliminate DevOps Hiring Needs,

Try it out now!